Native GPU Vs Colaboratory GPU and TPU

2020 update: I have rewritten the notebooks using a newer version of TensorFlow and added other frameworks and hyperparameter tuners. Read the post here.

First off, my intention is not to compare raw hardware performance, but rather to answer the question prompted by the recent announcement of TPU availability on Colab: “Which notebook platform should I use to train my neural network?”

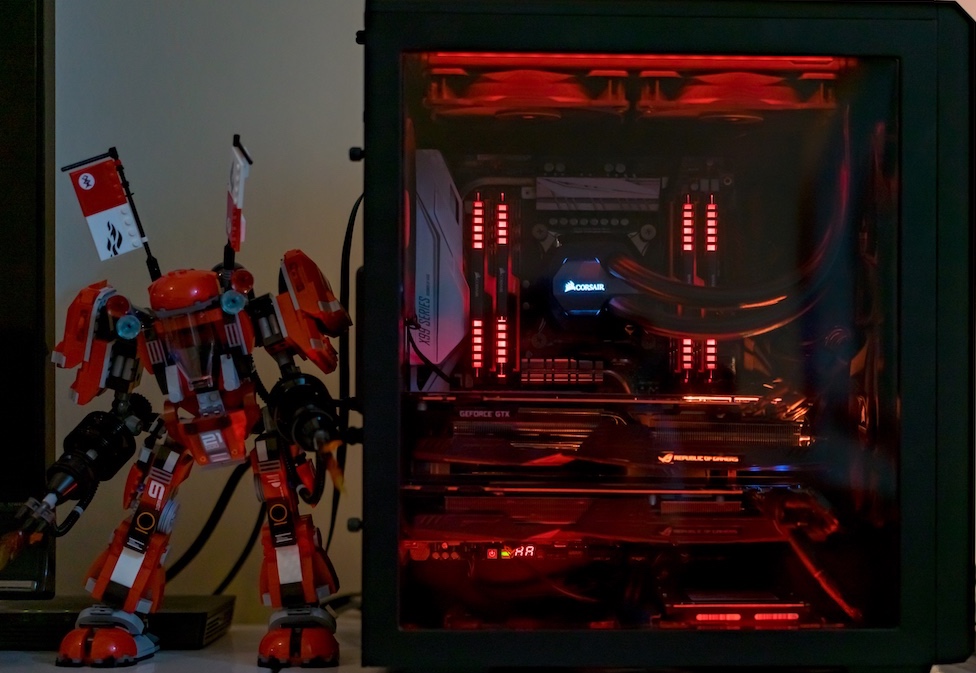

The options available to me were: JupyterLab/Jupyter Notebook hosted locally with an NVIDIA GTX 1080 Ti, Google Colaboratory with the GPU accelerator, and Colaboratory with the TPU accelerator.

But why a GPU or TPU? Why not use the CPU?

An article from Google [1] provides a good introduction to this question; I have summarized it in the table below.

| Processor | Use case | Number of ALUs | Matrix multiplication |
|---|---|---|---|
| CPU | Designed for general-purpose computing | <40 | Performs matrix multiplication sequentially, storing each calculation result in memory |
| GPU | Originally designed for gaming, but still capable of general-purpose computing | 4,000–5,000 | Performs matrix multiplication in parallel, but still stores calculation results in memory |
| TPU v2 | Designed as a matrix processor; cannot be used for general-purpose computing | 32,768 | Needs no memory access during the matrix multiplication itself (results pass directly between ALUs), giving a smaller footprint and lower power consumption |
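To make the ALU column a little more concrete, here is a minimal Python sketch that is not part of the original notebooks. It multiplies two matrices with the sequential triple loop a single ALU would execute, counts the multiply-accumulate (MAC) operations, and then computes an idealized lower bound on the number of steps if that work were spread evenly across the ALU counts from the table. The helper names are hypothetical, and the bound deliberately ignores clock speed, memory bandwidth and scheduling overhead, so it only illustrates why more parallel ALUs help, not real hardware timings.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def naive_matmul(a: Matrix, b: Matrix) -> Tuple[Matrix, int]:
    """Sequential triple-loop matrix multiply; returns (product, MAC count)."""
    n, k = len(a), len(a[0])
    if len(b) != k:
        raise ValueError("inner dimensions must match")
    m = len(b[0])
    out = [[0.0] * m for _ in range(n)]
    macs = 0
    for i in range(n):
        for j in range(m):
            acc = 0.0
            for p in range(k):
                acc += a[i][p] * b[p][j]  # one multiply-accumulate
                macs += 1
            out[i][j] = acc
    return out, macs


def ideal_steps(macs: int, alus: int) -> int:
    """Idealized lower bound on steps if every ALU does one MAC per step."""
    return -(-macs // alus)  # integer ceiling division


if __name__ == "__main__":
    product, macs = naive_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
    print(product, macs)  # [[19.0, 22.0], [43.0, 50.0]] 8

    n = 256  # a 256 x 256 by 256 x 256 product needs n**3 MACs
    for name, alus in [("CPU", 40), ("GPU", 4000), ("TPU v2", 32768)]:
        print(name, ideal_steps(n ** 3, alus))  # 419431, 4195, 512
```

The GPU row uses the low end of the table's 4,000–5,000 range; the point is only the order-of-magnitude gap between the three processors.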

Results

To run some quick tests, I created a simple convolutional network to classify CIFAR-10 images and trained it for 25 epochs on all three platforms, keeping the network architecture, batch size and hyperparameters constant.
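The timing code itself is not shown in the post, so the following is only a framework-agnostic sketch of how such wall-clock numbers can be collected. It assumes training is driven by a per-epoch callable; the name `train_one_epoch` is hypothetical (in a Keras notebook it might wrap a single `model.fit(..., epochs=1)` call). Keeping per-epoch times as well as the total lets you check whether the first epoch is dominated by one-off setup such as graph building.

```python
import time
from typing import Callable, List, Tuple


def time_training(
    train_one_epoch: Callable[[int], None], epochs: int = 25
) -> Tuple[float, List[float]]:
    """Run `train_one_epoch` once per epoch; return (total_seconds, per_epoch_seconds).

    `train_one_epoch` is a hypothetical callable that trains the model for one
    epoch. Architecture, batch size and hyperparameters should stay fixed
    across platforms so the timings are comparable.
    """
    per_epoch: List[float] = []
    start = time.perf_counter()
    for epoch in range(epochs):
        epoch_start = time.perf_counter()
        train_one_epoch(epoch)
        per_epoch.append(time.perf_counter() - epoch_start)
    total = time.perf_counter() - start
    return total, per_epoch


if __name__ == "__main__":
    # Stand-in workload so the sketch runs without TensorFlow installed.
    def fake_epoch(epoch: int) -> None:
        sum(i * i for i in range(10_000))

    total, per_epoch = time_training(fake_epoch, epochs=3)
    print(f"{len(per_epoch)} epochs in {total:.3f} s")
```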

| Notebook | Training time, 25 epochs (seconds) |
|---|---|
| NVIDIA GTX 1080 Ti (local) | 32 |
| Colab GPU (Tesla K80) | 86 |
| Colab TPU | 136 |
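Ratios are easier to read than raw seconds. This small sketch simply divides each measured time from the results table by the local 1080 Ti time; the dictionary keys are just short labels chosen here, and the ratios inherit every caveat about unoptimized code and hardware differences.

```python
from typing import Dict

# Training times in seconds for 25 epochs, taken from the results table.
TIMES: Dict[str, float] = {
    "1080Ti local": 32,
    "Colab GPU (K80)": 86,
    "Colab TPU": 136,
}


def slowdown_vs(baseline: str, times: Dict[str, float]) -> Dict[str, float]:
    """Return how many times longer each platform took than `baseline`."""
    base = times[baseline]
    return {name: t / base for name, t in times.items()}


if __name__ == "__main__":
    for name, ratio in slowdown_vs("1080Ti local", TIMES).items():
        print(f"{name}: {ratio:.2f}x")  # 1.00x, 2.69x, 4.25x
```

For this particular notebook, the Colab GPU took roughly 2.7 times and the Colab TPU 4.25 times as long as the local 1080 Ti.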

Again, this is not a fair comparison: the notebook probably doesn’t use code optimized for each target hardware, nor does it account for other hardware differences between the local machine and the cloud VMs. A local workstation with a good NVIDIA GPU works best, but with Colab we are free from the hassle of CUDA installations and upgrades, environment management and package management (at least for quick experimentation).

I wanted to find out whether I would get any benefit from simply moving my notebook from the local machine to Colab. On my laptop, which has an AMD GPU, the answer is yes: I would definitely use Colab with GPU acceleration, because my code runs as is, without any wrappers. The TPU accelerator, on the other hand, requires wrapping the model with contrib.tpu and does not seem to support eager mode yet. I expect these limitations to go away as TPU support moves from contrib into core TensorFlow.
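One small piece of that extra TPU plumbing is finding the TPU at all. In the TensorFlow 1.x era Colab TPU examples, the TPU address was read from the `COLAB_TPU_ADDR` environment variable and passed on as a `grpc://` URL; treat that as an assumption about the runtime rather than a guarantee. The plain-Python sketch below covers only this detection step, so a notebook can decide whether it needs the TPU wrapper or can run unchanged on a GPU. The function name is hypothetical, and the actual contrib.tpu wrapping is not shown.

```python
import os
from typing import Mapping, Optional


def colab_tpu_address(env: Mapping[str, str] = os.environ) -> Optional[str]:
    """Return a gRPC URL for the Colab TPU, or None when no TPU is attached.

    Assumption: Colab TPU runtimes set COLAB_TPU_ADDR to "host:port",
    while GPU and CPU runtimes leave it unset.
    """
    addr = env.get("COLAB_TPU_ADDR")
    return f"grpc://{addr}" if addr else None


if __name__ == "__main__":
    tpu = colab_tpu_address()
    if tpu is None:
        print("No TPU found: run the notebook as is (GPU/CPU).")
    else:
        print(f"TPU at {tpu}: the model needs the TPU wrapper.")
```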

Even when I am using my native GPU(s), access to Colab gives me the option of using a cloud GPU or TPU while the native GPU is busy training other networks.

Conclusion

Google Colaboratory provides excellent infrastructure “freely” to anyone with an internet connection. All you need is a laptop, a desktop or even a pi-top (I haven’t tried it), and you get instant access to a GPU or TPU. As Károly from Two Minute Papers [2] says, “What a time to be alive!”

References

[1] A good resource on why GPUs and TPUs are better than CPUs [link]

[2] Two Minute Papers [link]

[3] Code for this post [link]