Neural Text Generation

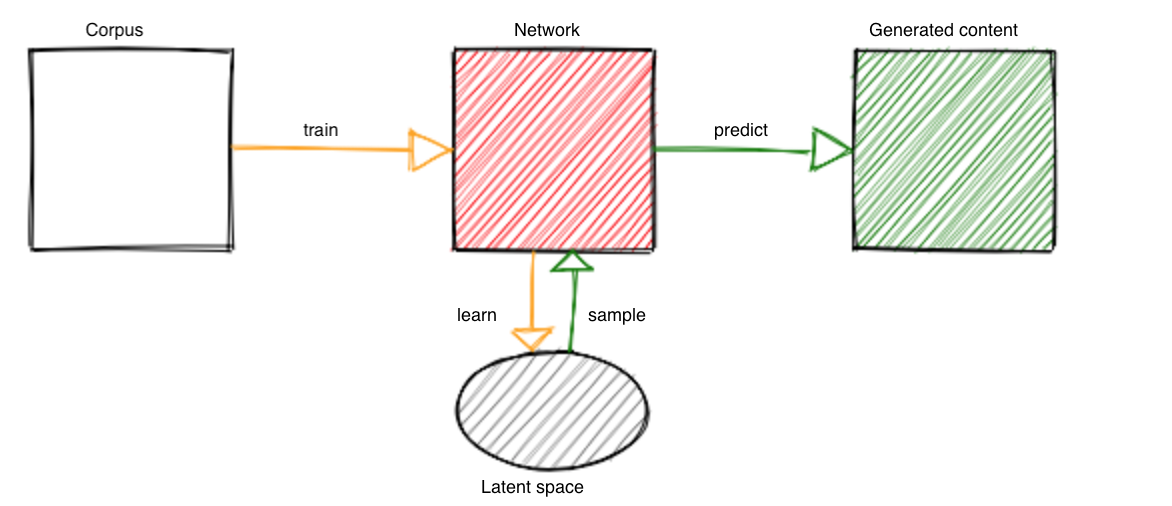

This post explores character-based language modeling using Long Short-Term Memory (LSTM) networks. The network is trained on a corpus of choice, such as movie scripts or novels, to learn a statistical model of the text (the latent space). New text is generated by sampling from this model. The network is trained by feeding it a sequence of characters, one at a time, and asking it to predict the next character. Initially, the network's predictions are random, but as training proceeds it learns to correct its mistakes and gets better at generating words and even sentences that appear meaningful. The network also learns dataset-specific characteristics such as opening and closing brackets, adding punctuation and hyphens, etc.
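To make the training setup concrete, here is a minimal sketch of how a corpus could be turned into (context, next character) examples. The fixed-length sliding window, the function names `build_vocab` and `make_training_pairs`, and the toy corpus are assumptions for illustration; the post does not specify how its training data was prepared.

```python
def build_vocab(text):
    """Map each distinct character to an integer id (sorted for determinism)."""
    chars = sorted(set(text))
    char_to_id = {ch: i for i, ch in enumerate(chars)}
    id_to_char = {i: ch for ch, i in char_to_id.items()}
    return char_to_id, id_to_char


def make_training_pairs(text, seq_len, char_to_id):
    """Slide a window over the text; each window's target is the next character."""
    ids = [char_to_id[ch] for ch in text]
    pairs = []
    for start in range(len(ids) - seq_len):
        context = ids[start:start + seq_len]  # what the network sees
        target = ids[start + seq_len]         # what it should predict
        pairs.append((context, target))
    return pairs


# Toy corpus (an assumption; a real run would use scripts, novels, etc.).
corpus = "hello world"
char_to_id, id_to_char = build_vocab(corpus)
pairs = make_training_pairs(corpus, seq_len=4, char_to_id=char_to_id)

for context, target in pairs[:3]:
    shown = "".join(id_to_char[i] for i in context)
    print(repr(shown), "->", repr(id_to_char[target]))
# 'hell' -> 'o'
# 'ello' -> ' '
# 'llo ' -> 'w'
```

Each pair would then be fed to the network, with the prediction error on the target character driving the weight updates.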

The rest of the article explores networks trained on various datasets.

Name Generator

We trained an LSTM network on superhero names to generate new character names. The network learned patterns in superhero naming conventions and can generate plausible new names.

Joke Generation

We trained a model on a dataset of jokes to see if it could learn to generate humorous content.

TED Talk Generation

Another experiment involved training on TED talk transcripts to generate speech-like text.

Conclusion

The models above had to learn the characters, put them together to form words, and construct sentences. It may appear that these models understand what they are generating, but that is not the case. What they have learned is a statistical model of the language in the dataset, and they are merely sampling from it.
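The idea of "a statistical model that is merely sampled" can be illustrated without a neural network at all. The sketch below swaps the LSTM for a much simpler stand-in, a table of character counts per two-character context, so it is not the method used in this post. The names `fit_char_model` and `sample_text` and the toy corpus are assumptions for illustration.

```python
import random
from collections import Counter, defaultdict


def fit_char_model(text, order=2):
    """Count which character follows each `order`-character context."""
    counts = defaultdict(Counter)
    for i in range(len(text) - order):
        counts[text[i:i + order]][text[i + order]] += 1
    return counts


def sample_text(counts, seed, length, rng):
    """Extend `seed` by repeatedly sampling the next character from the counts.

    `seed` must be exactly as long as the context size used in fitting.
    """
    order = len(seed)
    out = seed
    for _ in range(length):
        followers = counts.get(out[-order:])
        if not followers:  # context never seen in the corpus: stop early
            break
        chars = list(followers.keys())
        weights = list(followers.values())
        out += rng.choices(chars, weights=weights, k=1)[0]
    return out


# Toy corpus (an assumption, chosen only to keep the example small).
corpus = "the cat sat on the mat. the rat sat on the cat. "
model = fit_char_model(corpus, order=2)
print(sample_text(model, seed="th", length=40, rng=random.Random(0)))
```

The output looks like plausible fragments of the corpus, yet the program only ever looks up counts and draws random characters. An LSTM replaces the count table with a learned function of a much longer context, but the generation loop is the same kind of sampling.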

On their own, the models may not be of much use, but they could serve as tools to augment writers: for example, a smart editor that can autocomplete a sentence or show possible options (sampled from the latent space). The models could serve as autocomplete plugins (e.g., genre-based language models, or models trained on specific datasets such as speeches or movie scripts). There could even be a marketplace or plugin store to host pre-trained language models.
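As a rough sketch of the "show possible options" idea, the function below branches on the few likeliest next characters and then extends each branch greedily to the end of the word. The interface `next_char_probs(text) -> {char: probability}` is a hypothetical stand-in for a trained model, and `toy_next_char_probs` with its tiny word table exists only so the example runs; neither comes from the post.

```python
# Hypothetical word frequencies used by the toy model below.
WORDS = {"speech": 5, "special": 3, "spend": 2, "script": 4}


def toy_next_char_probs(text):
    """Stand-in for a trained model: next-character frequencies among
    known words that extend the current partial word."""
    partial = text.split(" ")[-1]
    counts = {}
    for word, freq in WORDS.items():
        if word.startswith(partial):
            # A finished word is followed by a space.
            nxt = word[len(partial)] if len(word) > len(partial) else " "
            counts[nxt] = counts.get(nxt, 0) + freq
    total = sum(counts.values())
    return {ch: c / total for ch, c in counts.items()}


def suggest(next_char_probs, prefix, k=3, max_len=12):
    """Return up to k completions of the word in progress."""
    ranked = sorted(next_char_probs(prefix).items(), key=lambda kv: -kv[1])
    suggestions = []
    for ch, _ in ranked[:k]:
        text = prefix + ch
        # Extend greedily until the word ends or the length budget is spent.
        while not text.endswith(" ") and len(text) - len(prefix) < max_len:
            probs = next_char_probs(text)
            if not probs:
                break
            text += max(probs, key=probs.get)
        suggestions.append(text.rstrip())
    return suggestions


print(suggest(toy_next_char_probs, "a spe"))
# ['a speech', 'a special', 'a spend']
```

A plugin built this way would only need to swap `toy_next_char_probs` for a call into a model trained on the genre or dataset of interest.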

PS: For those “forcing” their bots to read/write/watch, just ask them nicely and they’ll oblige, or else they might get back at you with a powerful tensor exception.

REFERENCES

[1] The Unreasonable Effectiveness of Recurrent Neural Networks by Andrej Karpathy